AI is becoming part of everyday work. It helps people draft content, review decisions, and support teams across many areas. On the surface, everything seems to be working well. Underneath, though, small problems can build up over time.

The issue is not only accuracy or performance. It is how people and AI systems relate to each other. When that relationship is unclear or inconsistent, trust starts to weaken. We call the accumulated cost of these gaps relational coherence debt.

You can think of it as hidden strain in a system. At first it is barely noticeable, but over time it builds into confusion, mistakes, and unexpected outcomes.

This shows up in simple ways. Teams may rely on AI too much without checking it properly. Others may ignore it completely because they do not trust it. In some cases, no one is sure who is responsible when something goes wrong.

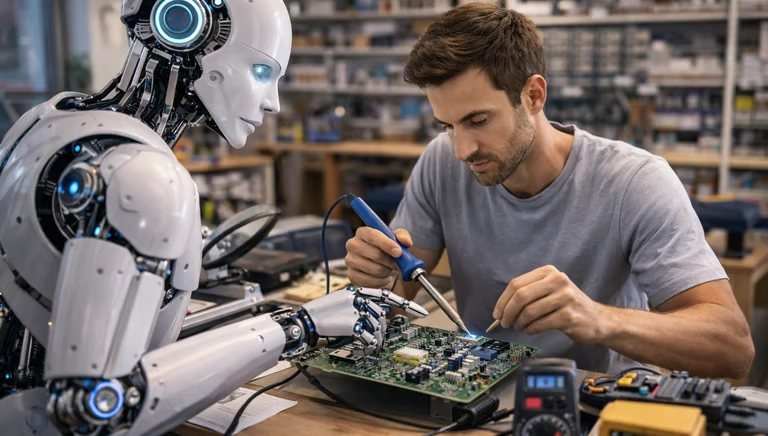

One of the main causes is treating AI as both a tool and a partner at the same time. When the system speaks like a teammate but is managed like a basic tool, people receive mixed signals. This leads to poor judgement and unclear accountability.

The solution starts with clarity.

Define what role the AI is playing. Is it supporting, advising, or acting within limits? Make this clear to everyone using it.
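One way to make the role explicit is to write it down as data rather than leaving it implied in how the system talks. The sketch below is a minimal illustration, assuming the three roles named above; the `AIRole` enum, the `ALLOWED_ACTIONS` table, and the action names are hypothetical examples, not a standard scheme.

```python
from enum import Enum


class AIRole(Enum):
    """Hypothetical role labels mirroring the three options in the text."""
    SUPPORTING = "supporting"  # drafts or gathers material; a person decides
    ADVISING = "advising"      # recommends; a named person approves
    ACTING = "acting"          # acts on its own, but only within set limits


# Hypothetical policy: which actions each role may take without sign-off.
ALLOWED_ACTIONS = {
    AIRole.SUPPORTING: {"draft", "summarize"},
    AIRole.ADVISING: {"draft", "summarize", "recommend"},
    AIRole.ACTING: {"draft", "summarize", "recommend", "execute"},
}


def is_permitted(role: AIRole, action: str) -> bool:
    """Return True if the declared role allows this action."""
    return action in ALLOWED_ACTIONS[role]


def role_statement(role: AIRole) -> str:
    """Plain-language statement of the role, suitable for showing to users."""
    actions = ", ".join(sorted(ALLOWED_ACTIONS[role]))
    return f"This assistant is {role.value}. It may: {actions}."
```

For example, `is_permitted(AIRole.ADVISING, "execute")` returns `False`, so an advising assistant would have to hand that step to a person. Generating the user-facing statement from the same table keeps what people are told consistent with what the system is actually allowed to do.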

Next, track how people are interacting with the system. Look for patterns like over-reliance, repeated corrections, or confusion around decisions. These are early warning signs.
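A simple way to watch for these patterns is to log a few yes/no facts about each interaction and flag any rate that climbs too high. This is a minimal sketch, assuming each interaction is tagged with whether the output was reviewed, whether it needed correcting, and whether ownership of the decision was unclear; the `Interaction` fields and the threshold values are hypothetical and would need tuning for a real team.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One hypothetical logged exchange between a person and the AI system."""
    reviewed: bool       # did the person check the output before using it?
    corrected: bool      # did the person have to fix the output?
    owner_unclear: bool  # was it unclear who owned the resulting decision?


def warning_signs(log: list[Interaction],
                  max_unreviewed: float = 0.5,
                  max_corrected: float = 0.3,
                  max_unclear: float = 0.1) -> list[str]:
    """Return the early-warning signals whose rate exceeds its threshold.

    The default thresholds are illustrative assumptions, not recommendations.
    """
    if not log:
        return []
    n = len(log)
    # Booleans sum as 0/1, so each sum counts matching interactions.
    unreviewed_rate = sum(not i.reviewed for i in log) / n
    corrected_rate = sum(i.corrected for i in log) / n
    unclear_rate = sum(i.owner_unclear for i in log) / n

    signs = []
    if unreviewed_rate > max_unreviewed:
        signs.append("over-reliance")
    if corrected_rate > max_corrected:
        signs.append("repeated corrections")
    if unclear_rate > max_unclear:
        signs.append("confusion around decisions")
    return signs
```

Tracking rates rather than single incidents matters here: one unchecked output is noise, but a rising share of them is the gradual build-up the article describes.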

Finally, design the system so it supports clear relationships. This means setting boundaries, keeping records of interactions, and making it easy to understand how decisions are made.
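Keeping records and making decisions understandable can be as simple as an append-only log in which every AI-assisted decision carries the system's stated reasoning and a named person who owns the outcome. The sketch below assumes that structure; `DecisionRecord`, `DecisionLog`, and their fields are hypothetical names chosen for illustration. The one boundary it enforces is that nothing is recorded without an accountable person.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: entries cannot be modified after creation
class DecisionRecord:
    """A hypothetical audit entry for one AI-assisted decision."""
    timestamp: str
    ai_role: str             # the declared role, e.g. "advising"
    ai_output: str           # what the system suggested or produced
    rationale: str           # the system's stated reasons, in plain language
    accountable_person: str  # who owns the final decision
    final_decision: str      # what was actually done


class DecisionLog:
    """Append-only log: entries can be added and read, but not edited."""

    def __init__(self) -> None:
        self._entries: list[DecisionRecord] = []

    def record(self, ai_role: str, ai_output: str, rationale: str,
               accountable_person: str, final_decision: str) -> DecisionRecord:
        if not accountable_person:
            # Boundary: no decision is logged without a named owner.
            raise ValueError("every decision needs an accountable person")
        entry = DecisionRecord(
            timestamp=datetime.now(timezone.utc).isoformat(),
            ai_role=ai_role,
            ai_output=ai_output,
            rationale=rationale,
            accountable_person=accountable_person,
            final_decision=final_decision,
        )
        self._entries.append(entry)
        return entry

    def entries(self) -> tuple[DecisionRecord, ...]:
        """Return a read-only snapshot of all entries."""
        return tuple(self._entries)

    def to_json(self) -> str:
        """Export the log so people outside the system can review it."""
        return json.dumps([asdict(e) for e in self._entries], indent=2)
```

Recording the AI output and the final decision side by side also makes it easy to see later where people followed the system and where they overrode it.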

The goal is simple: build AI systems that people can rely on without confusion. When relationships are clear, performance tends to improve and risk tends to fall.